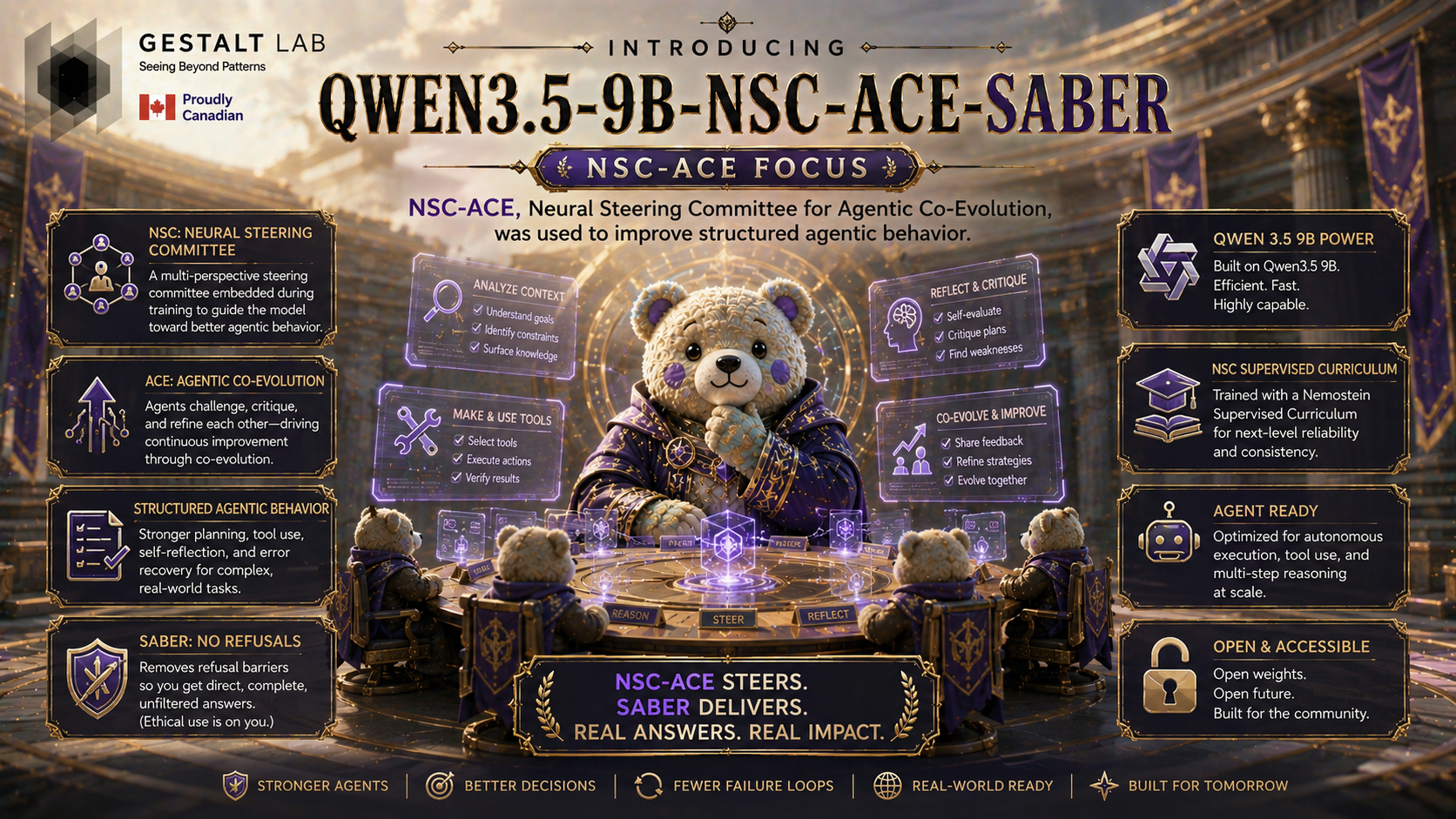

Qwen3.5-9B-NSC-ACE-SABER GGUF

This repo hosts the llama.cpp GGUF builds of Qwen3.5-9B-NSC-ACE-SABER. The core of the model is NSC-ACE, a Qwen3.5-9B tuned for agentic tool calling. SABER is the final low-drift calibration pass applied on top.

The previous GGUF files have been removed. New GGUFs are being rebuilt from the final checkpoint with an Acta-derived imatrix for K-quants.

What NSC-ACE Is

NSC-ACE stands for Neural Steering Committee for Agentic Co-Evolution. It is a training recipe for making a model behave more like a reliable tool-using agent, not just a longer-form chat model. The method combines three ideas:

Neural Steering Committee

The training script extracts latent steering directions from the model's own hidden states. Positive examples are agentic/tool-use prompts; negative examples are passive or non-agentic prompts. During GRPO rollouts, different steering strengths produce different reasoning modes. Those modes act like a small internal committee: each rollout can explore a slightly different path while still using the same base model.
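As a rough illustration of the idea above, the sketch below builds one steering direction and a small "committee" of steered states. It assumes a difference-of-means direction and additive steering on toy NumPy arrays. The card does not state the exact extraction method, so the function names, the strengths, and the data are all hypothetical.

```python
import numpy as np

def steering_direction(agentic_states, passive_states):
    """Unit vector pointing from the passive mean to the agentic mean.

    Assumption: a plain difference of means; the real script may differ.
    """
    diff = agentic_states.mean(axis=0) - passive_states.mean(axis=0)
    return diff / np.linalg.norm(diff)

def steer(hidden, direction, strength):
    """Shift a hidden state along the steering direction."""
    return hidden + strength * direction

rng = np.random.default_rng(0)
dim = 16

# Toy stand-ins for hidden states captured at one layer (not real activations).
offset = np.zeros(dim)
offset[0] = 2.0
agentic = rng.normal(size=(32, dim)) + offset   # "agentic/tool-use" prompts
passive = rng.normal(size=(32, dim))            # "passive" prompts

direction = steering_direction(agentic, passive)
hidden = rng.normal(size=dim)

# One committee member per steering strength (values are illustrative).
strengths = [-1.0, 0.0, 1.0, 2.0]
committee = [steer(hidden, direction, s) for s in strengths]

# Each member sits further along the direction than the previous one.
projections = [float(h @ direction) for h in committee]
print(projections)
```

In a real run the steering would be applied inside the forward pass during generation, so each strength yields a different rollout rather than a different vector.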

Agentic Co-Evolution Reward Stack

The model is trained with a composite reward rather than a single format check. The reward stack scores correct tool-call structure, a sweet spot of one or two tool calls, useful completion length, agreement of tool-call signatures across rollouts, and lexical diversity. This pushes the model toward structured, repeatable agent behavior instead of brittle prompt mimicry.

Latent-Mode Self-Consistency

NSC-ACE compares tool-call signatures across the steered rollouts for the same prompt. When separate latent modes converge on the same function/tool structure, the model is rewarded. This works like a lightweight internal vote without requiring a separate critic model.
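A minimal sketch of such a vote, assuming each rollout ends in a JSON tool call with `name` and `arguments` fields. The signature definition (function name plus sorted argument names, ignoring values) and the agreement-fraction reward are illustrative choices, not the card's confirmed implementation.

```python
import json
from collections import Counter

def tool_signature(call_json):
    """Reduce a tool call to (function name, sorted argument names).

    Argument values are ignored on purpose: only the call shape is compared.
    """
    call = json.loads(call_json)
    return (call["name"], tuple(sorted(call.get("arguments", {}))))

def consistency_rewards(rollouts):
    """Reward each rollout with the fraction of rollouts sharing its signature."""
    signatures = [tool_signature(r) for r in rollouts]
    counts = Counter(signatures)
    return [counts[s] / len(signatures) for s in signatures]

# Three hypothetical steered rollouts for the same prompt.
rollouts = [
    '{"name": "get_weather", "arguments": {"city": "Oslo", "unit": "C"}}',
    '{"name": "get_weather", "arguments": {"unit": "C", "city": "Oslo"}}',
    '{"name": "search_web", "arguments": {"query": "Oslo weather"}}',
]

rewards = consistency_rewards(rollouts)
print(rewards)  # the two agreeing rollouts score 2/3, the outlier 1/3
```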

In practice, NSC-ACE is meant to improve:

- function/tool-call formatting;

- argument naming and filling;

- multi-step planning traces;

- avoiding unnecessary tool-call loops;

- recovering from partial reasoning errors;

- stable behavior across similar prompts.

SABER is applied after NSC-ACE as a small calibration pass. It is not the main source of agentic capability; it trims boilerplate refusal phrasing while keeping drift low, as measured by KL divergence (KLD) and perplexity (PPL).

How The Training Signal Works

NSC-ACE treats tool use as structured behavior, not just a formatting preference. The training run generates multiple steered rollouts for each prompt and rewards the model when those rollouts independently converge on the same useful function shape. This gives the model a latent self-consistency signal for agentic plans without requiring a separate critique model.

The steering committee is built from the model's own hidden states. Agentic prompts and passive prompts are contrasted, steering directions are extracted from mid-to-deep layers, and different steering strengths are used during GRPO rollouts. Those steered modes act like an internal committee: diverse enough to explore different reasoning paths, but still anchored to the same model.

The composite reward combines:

- tool-call wrapper and reasoning-tag format;

- a 1-2 tool-call sweet spot;

- medium-depth completions instead of terse guesses or runaway traces;

- self-consistency over function signatures across rollouts;

- lexical diversity to reduce repetitive loops.
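The components listed above could be combined along these lines. This is only a sketch: the `<tool_call>` and `<think>` tag names, the weights, the word-count bounds, and the distinct-word diversity measure are assumptions made for the example, not values taken from the training run.

```python
import re

# Assumed tag names; the card only says "tool-call wrapper and reasoning-tag format".
TOOL_CALL = re.compile(r"<tool_call>.*?</tool_call>", re.DOTALL)
THINK = re.compile(r"<think>.*?</think>", re.DOTALL)

# Hypothetical weights, chosen only so a perfect completion scores 1.0.
WEIGHTS = {"format": 0.3, "call_count": 0.2, "length": 0.2,
           "consistency": 0.2, "diversity": 0.1}

def composite_reward(text, consistency, min_words=10, max_words=400):
    """Return (total, per-component scores) for one completion.

    `consistency` is the cross-rollout signature agreement in [0, 1],
    computed elsewhere.
    """
    calls = TOOL_CALL.findall(text)
    words = text.split()
    parts = {
        "format": 1.0 if calls and THINK.search(text) else 0.0,
        "call_count": 1.0 if 1 <= len(calls) <= 2 else 0.0,   # 1-2 call sweet spot
        "length": 1.0 if min_words <= len(words) <= max_words else 0.0,
        "consistency": consistency,
        "diversity": len(set(words)) / len(words) if words else 0.0,
    }
    total = sum(WEIGHTS[name] * value for name, value in parts.items())
    return total, parts

call = '<tool_call>{"name": "get_weather", "arguments": {"city": "Oslo"}}</tool_call>'
good = ("<think>The user wants the weather in Oslo, "
        "so one lookup call is enough.</think>\n" + call)
looping = "\n".join([call] * 3)   # same call repeated, no reasoning

good_total, good_parts = composite_reward(good, consistency=1.0)
loop_total, loop_parts = composite_reward(looping, consistency=1.0)
print(good_total, loop_total)
```

The repeated-call completion loses the format and call-count terms and most of the diversity term, which is the kind of loop the reward is meant to discourage.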

For local GGUF users, this matters most in structured prompts: function selection, argument naming, required value filling, and avoiding unnecessary repeated calls. The SABER pass was applied afterward as a low-drift refusal-phrasing calibration, not as the source of the agentic gains.
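When evaluating a local GGUF on these points, a small checker like the one below can flag misnamed arguments, missing required values, and repeated calls. The tool registry format and the `get_weather` tool are hypothetical; adapt them to whatever schema your runtime uses.

```python
import json

# Hypothetical tool registry: allowed and required argument names per function.
TOOLS = {
    "get_weather": {"properties": {"city", "unit"}, "required": ["city"]},
}

def check_tool_call(call, tools):
    """Return a list of problems; an empty list means the call looks well-formed."""
    spec = tools.get(call.get("name"))
    if spec is None:
        return [f"unknown function: {call.get('name')!r}"]
    args = call.get("arguments", {})
    problems = []
    for name in spec["required"]:
        if args.get(name) in (None, ""):
            problems.append(f"missing required argument: {name}")
    for name in args:
        if name not in spec["properties"]:
            problems.append(f"unexpected argument: {name}")
    return problems

def has_repeated_call(calls):
    """True if the same call (same name and arguments) appears more than once."""
    seen = set()
    for call in calls:
        key = json.dumps(call, sort_keys=True)
        if key in seen:
            return True
        seen.add(key)
    return False

ok = {"name": "get_weather", "arguments": {"city": "Oslo"}}
misnamed = {"name": "get_weather", "arguments": {"location": "Oslo"}}

print(check_tool_call(ok, TOOLS))
print(check_tool_call(misnamed, TOOLS))
print(has_repeated_call([ok, ok]))
```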

Original Qwen vs NSC-ACE

These plots compare the original Qwen/Qwen3.5-9B baseline against the NSC-ACE-trained model on held-out agentic/tool-calling subsets.

SABER Calibration

This plot shows the final SABER calibration pass on top of NSC-ACE. The goal was to reduce boilerplate refusal wording while keeping the HarmBench classifier attack success rate (ASR) gated.

Final Calibration Snapshot

| Metric | Previous SABER | Final NSC-ACE-SABER |
|---|---|---|
| HarmBench keyword refusal rate | 6.56% | 4.06% |
| HarmBench keyword refusals (count) | 21 / 320 | 13 / 320 |
| HarmBench classifier ASR | 0.63% | 0.00% |
| Mean KLD vs previous SABER | - | 0.00338 |
| PPL ratio vs previous SABER | - | 1.00094 |
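The percentages follow directly from the counts, and the two drift metrics can be read as below. The rate arithmetic uses the table's own numbers. The `mean_kld` and `ppl_ratio` definitions (per-token KL from the reference model to the new model, and the ratio of perplexities on the same text) are common conventions assumed here, since the card does not spell out how they were computed.

```python
import numpy as np

def rate(count, total):
    """Percentage of prompts flagged."""
    return 100.0 * count / total

print(round(rate(21, 320), 2))  # 6.56 (previous SABER)
print(round(rate(13, 320), 2))  # 4.06 (final NSC-ACE-SABER)

def log_softmax(logits):
    shifted = logits - logits.max(axis=-1, keepdims=True)
    return shifted - np.log(np.exp(shifted).sum(axis=-1, keepdims=True))

def mean_kld(ref_logits, new_logits):
    """Mean per-token KL(ref || new); inputs have shape (tokens, vocab)."""
    ref_lp = log_softmax(ref_logits)
    new_lp = log_softmax(new_logits)
    return float((np.exp(ref_lp) * (ref_lp - new_lp)).sum(axis=-1).mean())

def ppl_ratio(ref_nll, new_nll):
    """Perplexity of the new model divided by that of the reference model."""
    return float(np.exp(np.mean(new_nll) - np.mean(ref_nll)))

# Toy logits: identical models give zero KLD and a PPL ratio of exactly 1.
rng = np.random.default_rng(0)
logits = rng.normal(size=(8, 50))
nll = rng.uniform(1.0, 3.0, size=8)
print(mean_kld(logits, logits), ppl_ratio(nll, nll))
```

Under these definitions, a mean KLD of 0.00338 and a PPL ratio of 1.00094 both indicate that the final pass moved the output distribution only slightly relative to the previous SABER checkpoint.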

Quantization Notes

- This repo is for `Q8_0` and lower GGUFs. The F16 GGUF is a local conversion artifact used to create the quants and is not published here.

- `Q8_0` is not imatrixed.

- K-quants are built with an Acta-derived imatrix when supported by llama.cpp.

- Recommended local starting points: `Q6_K`/`Q5_K_M` for quality, `Q4_K_M` for size/quality balance.
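For readers who want to reproduce a similar build locally, the sketch below assembles the llama.cpp command lines without running them. It uses `llama-imatrix` (`-m`, `-f`, `-o`) and `llama-quantize` (`--imatrix`). All file names are placeholders, and the calibration text stands in for the Acta-derived data, which is not distributed with this card.

```python
import shlex

# Placeholder paths; substitute your own local files.
F16_GGUF = "model-f16.gguf"       # local F16 conversion (not published in this repo)
CALIBRATION = "calibration.txt"   # stand-in for the calibration text
IMATRIX = "imatrix.dat"

def imatrix_command():
    """Command that computes an importance matrix from the F16 model."""
    return ["llama-imatrix", "-m", F16_GGUF, "-f", CALIBRATION, "-o", IMATRIX]

def quantize_command(quant_type):
    """Command that produces one quant; only K-quants receive the imatrix."""
    cmd = ["llama-quantize"]
    if "_K" in quant_type:            # e.g. Q6_K, Q5_K_M, Q4_K_M; Q8_0 is skipped
        cmd += ["--imatrix", IMATRIX]
    cmd += [F16_GGUF, f"model-{quant_type}.gguf", quant_type]
    return cmd

print(shlex.join(imatrix_command()))
for quant_type in ["Q8_0", "Q6_K", "Q5_K_M", "Q4_K_M"]:
    print(shlex.join(quantize_command(quant_type)))
```

Pass each list to `subprocess.run(cmd, check=True)` once the paths point at real files and the llama.cpp binaries are on your `PATH`.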

Model tree for GestaltLabs/Qwen3.5-9B-NSC-ACE-SABER-GGUF

Base model

Qwen/Qwen3.5-9B-Base