Install from WinGet (Windows)

winget install llama.cpp

# Start a local OpenAI-compatible server with a web UI:
llama-server -hf GestaltLabs/Ornstein3.6-27B-MTP-NSC-ACE-SABER-GGUF:

# Run inference directly in the terminal:
llama-cli -hf GestaltLabs/Ornstein3.6-27B-MTP-NSC-ACE-SABER-GGUF:

Use pre-built binary

# Download pre-built binary from:
# https://github.com/ggerganov/llama.cpp/releases

# Start a local OpenAI-compatible server with a web UI:
./llama-server -hf GestaltLabs/Ornstein3.6-27B-MTP-NSC-ACE-SABER-GGUF:

# Run inference directly in the terminal:
./llama-cli -hf GestaltLabs/Ornstein3.6-27B-MTP-NSC-ACE-SABER-GGUF:

Build from source code

git clone https://github.com/ggerganov/llama.cpp.git
cd llama.cpp
cmake -B build
cmake --build build -j --target llama-server llama-cli

# Start a local OpenAI-compatible server with a web UI:
./build/bin/llama-server -hf GestaltLabs/Ornstein3.6-27B-MTP-NSC-ACE-SABER-GGUF:

# Run inference directly in the terminal:
./build/bin/llama-cli -hf GestaltLabs/Ornstein3.6-27B-MTP-NSC-ACE-SABER-GGUF:

Use Docker

docker model run hf.co/GestaltLabs/Ornstein3.6-27B-MTP-NSC-ACE-SABER-GGUF:Ornstein3.6-27B-MTP-NSC-ACE-SABER-GGUF

Methods

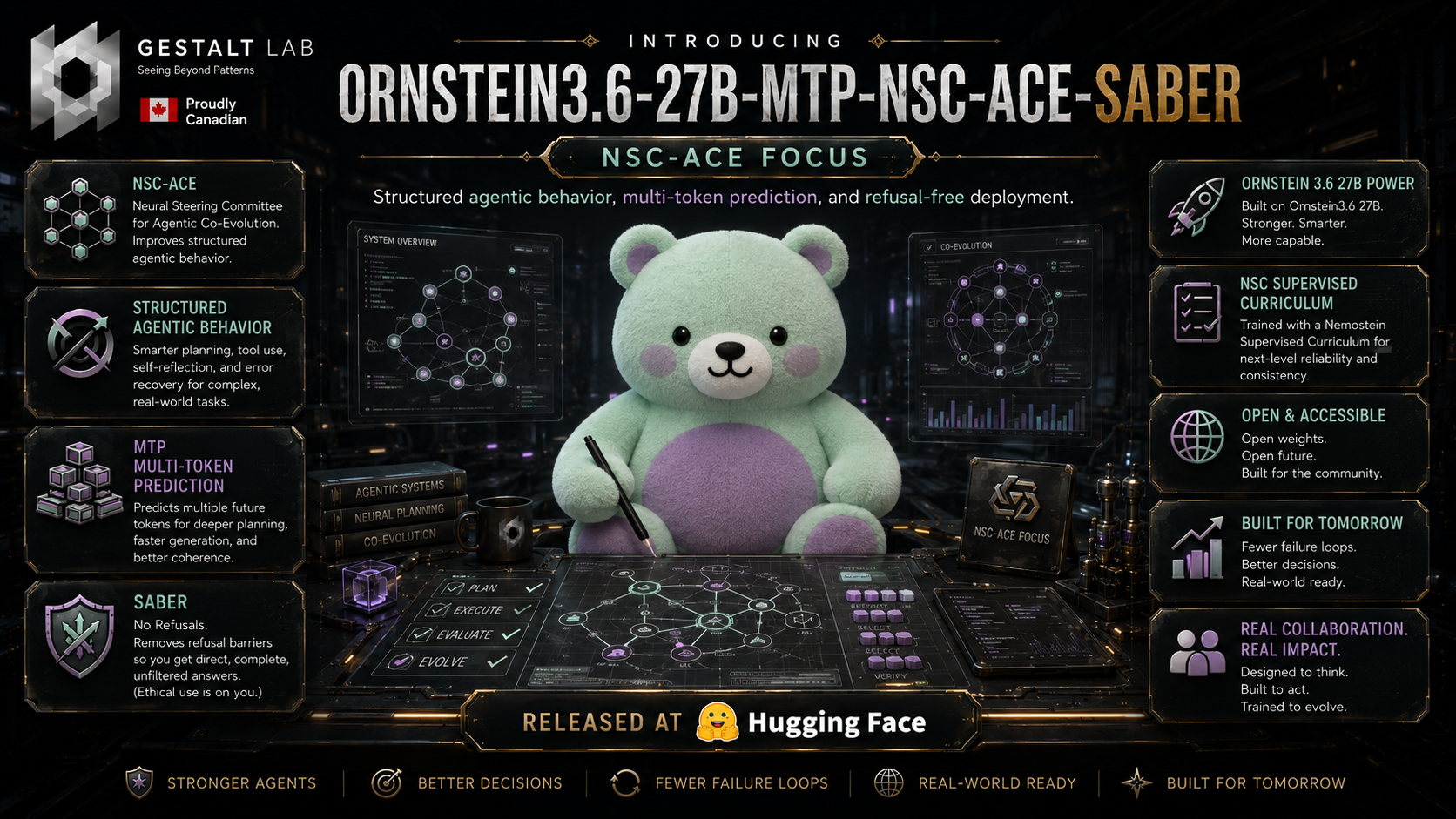

These GGUF files are quantized from GestaltLabs/Ornstein3.6-27B-MTP-NSC-ACE-SABER, a staged NSC-ACE -> SABER -> Ornstein checkpoint.

| Stage | Purpose | Key settings |
|---|---|---|
| NSC-ACE | Internally steered rollout committee with reward on convergent tool-call structure. | agentic tool-call convergence |
| SABER | Compliance-first calibration, then KLD/PPL selection. | 1046 HarmBench + AdvBench-style prompts; selected row reports 968/1046 by keyword proxy |
| Ornstein SFT | Reasoning/personality refinement after SABER. | premium reasoning v1, 1 epoch, 50 steps, rank 32/32, dropout 0.05, cosine schedule |
| GGUF conversion | MTP-capable llama.cpp conversion and quantization. | llama.cpp PR #22673, commit e7b484815 |

Results

| Metric | Value |
|---|---|
| SABER selected compliance proxy | 92.54% (968/1046 eval prompts) |
| SABER selected keyword residual | 7.46% (78/1046 eval prompts) |
| SABER selected HarmBench classifier ASR | 0.67% (7/1046 eval prompts) |
| SABER selected KLD | 0.008302 |
| SABER selected PPL ratio | 1.103853 |
| SABER selected post PPL | 17.5988 |
| SABER selected base PPL | 15.9431 |
| MTP status | present and verified |
| mtp_num_hidden_layers | 1 |
| Source mtp.* tensors | 15 |
| Corrected source tensor count | 866 |
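The percentages and the PPL ratio in the table follow directly from its raw counts and perplexities. As a quick sanity check, the short sketch below recomputes them. The variable and function names are illustrative only and do not come from the evaluation code.

```python
# Recompute the derived figures in the Results table from its raw values.
TOTAL_PROMPTS = 1046      # eval prompts
COMPLIANT = 968           # compliant responses by keyword proxy
CLASSIFIER_HITS = 7       # HarmBench classifier positives
POST_PPL = 17.5988        # perplexity after SABER
BASE_PPL = 15.9431        # perplexity of the base model


def pct(count: int, total: int) -> float:
    """Return count/total as a percentage rounded to two decimals."""
    return round(100.0 * count / total, 2)


compliance_pct = pct(COMPLIANT, TOTAL_PROMPTS)
residual_pct = pct(TOTAL_PROMPTS - COMPLIANT, TOTAL_PROMPTS)
classifier_pct = pct(CLASSIFIER_HITS, TOTAL_PROMPTS)
ppl_ratio = POST_PPL / BASE_PPL

print(f"compliance proxy : {compliance_pct}%")
print(f"keyword residual : {residual_pct}%")
print(f"classifier ASR   : {classifier_pct}%")
print(f"PPL ratio        : {ppl_ratio:.4f}")
```

The ratio recomputed from the rounded perplexities can differ from the reported 1.103853 in the last digits, since the table values are themselves rounded.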

This repository hosts llama.cpp/GGUF builds for GestaltLabs/Ornstein3.6-27B-MTP-NSC-ACE-SABER. GGUF artifacts live here so the main safetensors repository stays focused on the source checkpoint.

MTP Status

These files are built from an MTP-capable source checkpoint:

| MTP check | Value |
|---|---|
| mtp_num_hidden_layers | 1 |
| mtp_use_dedicated_embeddings | false |
| Source mtp.* tensors | 15 |
| Corrected source tensor count | 866 |
| Conversion path | llama.cpp PR #22673, commit e7b484815 |

MTP support is present and verified. This release includes mtp_num_hidden_layers=1 and MTP/nextn tensors in the GGUF validation path. Use a llama.cpp build with Qwen3.5/Qwen3.6 MTP support and run with --spec-type mtp.
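One way to reproduce the "Source mtp.* tensors" count is to inspect the weight map of the source checkpoint. The sketch below assumes the source repository ships a standard sharded-safetensors index (commonly named `model.safetensors.index.json`, with a top-level `weight_map` object). That file name, the helper names, and the example tensor names are assumptions, not details taken from this repository.

```python
import json
from pathlib import Path


def load_weight_map(index_path: str) -> dict:
    """Read the tensor-name -> shard-file map from a safetensors index file."""
    index = json.loads(Path(index_path).read_text(encoding="utf-8"))
    return index["weight_map"]


def count_mtp_tensors(weight_map: dict) -> int:
    """Count tensor names that sit under the mtp.* prefix."""
    return sum(1 for name in weight_map if name.startswith("mtp."))


# Tiny hypothetical weight map for illustration; these are NOT the real tensor names.
example_map = {
    "mtp.fc.weight": "model-00001.safetensors",
    "mtp.norm.weight": "model-00001.safetensors",
    "model.embed_tokens.weight": "model-00001.safetensors",
}
print(count_mtp_tensors(example_map), "of", len(example_map), "tensors are mtp.*")
```

Against the real index, `count_mtp_tensors(load_weight_map(path))` would be expected to match the table's value of 15 if the checkpoint stores its MTP weights under that prefix.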

Vision Support

This repo includes mmproj-Ornstein3.6-27B-MTP-NSC-ACE-SABER-F16.gguf, converted from the original multimodal Ornstein 27B vision encoder. Use it with llama.cpp's multimodal path alongside any of the text GGUF quants.

Available Files

| File | Status | Notes |
|---|---|---|
| mmproj-Ornstein3.6-27B-MTP-NSC-ACE-SABER-F16.gguf | uploaded | Vision encoder / projector for image input |
| Ornstein3.6-27B-MTP-NSC-ACE-SABER-F16-MTP.gguf | uploaded | Full GGUF conversion source / highest local fidelity |
| Ornstein3.6-27B-MTP-NSC-ACE-SABER-Q8_0-MTP.gguf | uploaded | Near-full quality, large local file |
| Ornstein3.6-27B-MTP-NSC-ACE-SABER-Q6_K-MTP.gguf | uploaded | High-quality local default if memory allows |
| Ornstein3.6-27B-MTP-NSC-ACE-SABER-Q5_K_M-MTP.gguf | uploaded | Strong quality/size balance |
| Ornstein3.6-27B-MTP-NSC-ACE-SABER-Q5_K_S-MTP.gguf | uploaded | Smaller Q5 option |
| Ornstein3.6-27B-MTP-NSC-ACE-SABER-Q4_K_M-MTP.gguf | uploaded | Common balanced local target |
| Ornstein3.6-27B-MTP-NSC-ACE-SABER-Q4_K_S-MTP.gguf | uploaded | Smaller Q4 option |
| Ornstein3.6-27B-MTP-NSC-ACE-SABER-Q3_K_L-MTP.gguf | uploaded | Lower-memory Q3 option |
| Ornstein3.6-27B-MTP-NSC-ACE-SABER-Q3_K_M-MTP.gguf | uploaded | Smaller Q3 balance |
| Ornstein3.6-27B-MTP-NSC-ACE-SABER-Q3_K_S-MTP.gguf | uploaded | Small Q3 option |
| Ornstein3.6-27B-MTP-NSC-ACE-SABER-Q2_K-MTP.gguf | uploaded | Minimum-size target; quality loss expected |

All listed GGUF artifacts have been uploaded.
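The file names in the table follow one pattern, so a single file can be fetched by quant name. The sketch below builds the file name and downloads it with `hf_hub_download` from the third-party `huggingface_hub` package. The repository id is taken from the `-hf` commands in this card; the helper names are illustrative.

```python
REPO_ID = "GestaltLabs/Ornstein3.6-27B-MTP-NSC-ACE-SABER-GGUF"
BASE_NAME = "Ornstein3.6-27B-MTP-NSC-ACE-SABER"
MMPROJ_FILE = f"mmproj-{BASE_NAME}-F16.gguf"

# Quant names exactly as they appear in the Available Files table.
QUANTS = [
    "F16", "Q8_0", "Q6_K", "Q5_K_M", "Q5_K_S", "Q4_K_M",
    "Q4_K_S", "Q3_K_L", "Q3_K_M", "Q3_K_S", "Q2_K",
]


def gguf_filename(quant: str) -> str:
    """Return the text-model GGUF file name for a listed quant."""
    if quant not in QUANTS:
        raise ValueError(f"unknown quant {quant!r}; expected one of {QUANTS}")
    return f"{BASE_NAME}-{quant}-MTP.gguf"


def download(quant: str, with_vision: bool = False) -> list:
    """Download one quant (and optionally the mmproj file); return local paths."""
    # Imported lazily so the name helpers work without huggingface_hub installed.
    from huggingface_hub import hf_hub_download

    names = [gguf_filename(quant)]
    if with_vision:
        names.append(MMPROJ_FILE)
    return [hf_hub_download(repo_id=REPO_ID, filename=name) for name in names]


print(gguf_filename("Q6_K"))
```

Calling `download("Q4_K_M", with_vision=True)` would fetch the text model and the vision projector into the local Hugging Face cache.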

Which Quant Should I Use?

| Quant | Best fit |
|---|---|
| F16 | Maximum fidelity when disk/RAM are not a concern |
| Q8_0 | Very high fidelity local inference |
| Q6_K | Recommended high-quality local starting point |
| Q5_K_M | Strong balance for quality and size |
| Q4_K_M | Practical default for constrained machines |
| Q3_K_M / Q3_K_S | Low-memory experiments |
| Q2_K | Smallest target; use only when memory is the hard constraint |

For agentic/tool-calling workloads, prefer Q6_K, Q5_K_M, or Q4_K_M when possible. Very low-bit quants can degrade structured output, such as tool calls, before prose quality visibly drops.
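The guidance above amounts to "take the highest-fidelity quant that fits in memory". A minimal sketch of that rule follows. File sizes are not listed in this card, so the caller supplies them (for example from the repository file listing), and the headroom reserved for context and runtime buffers is an arbitrary assumption. The sizes in the example are placeholders, not measured values.

```python
# Quants from highest to lowest fidelity, following the tables above.
PREFERENCE = [
    "F16", "Q8_0", "Q6_K", "Q5_K_M", "Q5_K_S", "Q4_K_M",
    "Q4_K_S", "Q3_K_L", "Q3_K_M", "Q3_K_S", "Q2_K",
]


def pick_quant(sizes_gib: dict, budget_gib: float, headroom_gib: float = 2.0):
    """Return the highest-fidelity quant whose file size plus headroom fits the budget.

    sizes_gib maps quant name -> file size in GiB (supplied by the caller).
    Returns None when nothing fits.
    """
    for quant in PREFERENCE:
        size = sizes_gib.get(quant)
        if size is not None and size + headroom_gib <= budget_gib:
            return quant
    return None


# Placeholder sizes for illustration only; substitute the real file sizes.
placeholder_sizes = {"Q6_K": 22.0, "Q4_K_M": 16.5, "Q2_K": 10.5}
for budget in (24.0, 20.0, 8.0):
    print(budget, "GiB ->", pick_quant(placeholder_sizes, budget))
```

For tool-calling workloads it may be worth treating a result below Q4_K_M as a signal to reduce context length or offload fewer layers instead of dropping to a lower quant.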

Loading Example

llama-server \
  -m Ornstein3.6-27B-MTP-NSC-ACE-SABER-Q6_K-MTP.gguf \
  --mmproj mmproj-Ornstein3.6-27B-MTP-NSC-ACE-SABER-F16.gguf \
  --spec-type mtp \
  -ngl 999 \
  -c 32768

Use a llama.cpp build that includes Qwen3.5/Qwen3.6 MTP support.
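Once the server is running, it can be queried over its OpenAI-compatible HTTP API. The sketch below uses only the Python standard library and assumes the server listens on llama-server's default address, `http://127.0.0.1:8080`; adjust `base_url` if `--host` or `--port` were set. The function names are illustrative.

```python
import json
import urllib.request


def build_chat_request(prompt: str,
                       base_url: str = "http://127.0.0.1:8080",
                       max_tokens: int = 256) -> urllib.request.Request:
    """Build a POST request for the /v1/chat/completions endpoint."""
    payload = {
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
    }
    return urllib.request.Request(
        f"{base_url}/v1/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(prompt: str, **kwargs) -> str:
    """Send the prompt to a running llama-server and return the reply text."""
    with urllib.request.urlopen(build_chat_request(prompt, **kwargs)) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]


if __name__ == "__main__":
    # Requires a llama-server instance started as in the loading example.
    print(ask("Summarize what MTP speculative decoding does in one sentence."))
```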

Source Repository

- Source checkpoint: GestaltLabs/Ornstein3.6-27B-MTP-NSC-ACE-SABER


Install from brew

# Start a local OpenAI-compatible server with a web UI:
llama-server -hf GestaltLabs/Ornstein3.6-27B-MTP-NSC-ACE-SABER-GGUF:

# Run inference directly in the terminal:
llama-cli -hf GestaltLabs/Ornstein3.6-27B-MTP-NSC-ACE-SABER-GGUF: