Jina AI commited on

Commit ·

2b230c9

0

Parent(s):

Initial public release

Browse files- .gitattributes +37 -0

- README.md +260 -0

- adapters/classification/adapter_config.json +39 -0

- adapters/classification/adapter_model.safetensors +3 -0

- adapters/clustering/adapter_config.json +39 -0

- adapters/clustering/adapter_model.safetensors +3 -0

- adapters/retrieval/adapter_config.json +39 -0

- adapters/retrieval/adapter_model.safetensors +3 -0

- adapters/text-matching/adapter_config.json +39 -0

- adapters/text-matching/adapter_model.safetensors +3 -0

- architecture.png +3 -0

- chat_template.jinja +154 -0

- config.json +101 -0

- config_sentence_transformers.json +8 -0

- custom_st.py +990 -0

- model.safetensors +3 -0

- modeling_jina_embeddings_v5_omni.py +616 -0

- modeling_llava_eurobert_audio.py +400 -0

- modules.json +8 -0

- preprocessor_config.json +24 -0

- processing_llava_eurobert.py +65 -0

- processor_config.json +68 -0

- tokenizer.json +3 -0

- tokenizer_config.json +23 -0

- video_preprocessor_config.json +29 -0

- vllm_jina_v5_omni.py +175 -0

- vllm_llava_eurobert_audio.py +889 -0

.gitattributes

ADDED

|

@@ -0,0 +1,37 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

*.7z filter=lfs diff=lfs merge=lfs -text

|

| 2 |

+

*.arrow filter=lfs diff=lfs merge=lfs -text

|

| 3 |

+

*.bin filter=lfs diff=lfs merge=lfs -text

|

| 4 |

+

*.bz2 filter=lfs diff=lfs merge=lfs -text

|

| 5 |

+

*.ckpt filter=lfs diff=lfs merge=lfs -text

|

| 6 |

+

*.ftz filter=lfs diff=lfs merge=lfs -text

|

| 7 |

+

*.gz filter=lfs diff=lfs merge=lfs -text

|

| 8 |

+

*.h5 filter=lfs diff=lfs merge=lfs -text

|

| 9 |

+

*.joblib filter=lfs diff=lfs merge=lfs -text

|

| 10 |

+

*.lfs.* filter=lfs diff=lfs merge=lfs -text

|

| 11 |

+

*.mlmodel filter=lfs diff=lfs merge=lfs -text

|

| 12 |

+

*.model filter=lfs diff=lfs merge=lfs -text

|

| 13 |

+

*.msgpack filter=lfs diff=lfs merge=lfs -text

|

| 14 |

+

*.npy filter=lfs diff=lfs merge=lfs -text

|

| 15 |

+

*.npz filter=lfs diff=lfs merge=lfs -text

|

| 16 |

+

*.onnx filter=lfs diff=lfs merge=lfs -text

|

| 17 |

+

*.ot filter=lfs diff=lfs merge=lfs -text

|

| 18 |

+

*.parquet filter=lfs diff=lfs merge=lfs -text

|

| 19 |

+

*.pb filter=lfs diff=lfs merge=lfs -text

|

| 20 |

+

*.pickle filter=lfs diff=lfs merge=lfs -text

|

| 21 |

+

*.pkl filter=lfs diff=lfs merge=lfs -text

|

| 22 |

+

*.pt filter=lfs diff=lfs merge=lfs -text

|

| 23 |

+

*.pth filter=lfs diff=lfs merge=lfs -text

|

| 24 |

+

*.rar filter=lfs diff=lfs merge=lfs -text

|

| 25 |

+

*.safetensors filter=lfs diff=lfs merge=lfs -text

|

| 26 |

+

saved_model/**/* filter=lfs diff=lfs merge=lfs -text

|

| 27 |

+

*.tar.* filter=lfs diff=lfs merge=lfs -text

|

| 28 |

+

*.tar filter=lfs diff=lfs merge=lfs -text

|

| 29 |

+

*.tflite filter=lfs diff=lfs merge=lfs -text

|

| 30 |

+

*.tgz filter=lfs diff=lfs merge=lfs -text

|

| 31 |

+

*.wasm filter=lfs diff=lfs merge=lfs -text

|

| 32 |

+

*.xz filter=lfs diff=lfs merge=lfs -text

|

| 33 |

+

*.zip filter=lfs diff=lfs merge=lfs -text

|

| 34 |

+

*.zst filter=lfs diff=lfs merge=lfs -text

|

| 35 |

+

*tfevents* filter=lfs diff=lfs merge=lfs -text

|

| 36 |

+

tokenizer.json filter=lfs diff=lfs merge=lfs -text

|

| 37 |

+

architecture.png filter=lfs diff=lfs merge=lfs -text

|

README.md

ADDED

|

@@ -0,0 +1,260 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

---

|

| 2 |

+

pipeline_tag: sentence-similarity

|

| 3 |

+

tags:

|

| 4 |

+

- embedding

|

| 5 |

+

- jina-embeddings-v5

|

| 6 |

+

- feature-extraction

|

| 7 |

+

- sentence-transformers

|

| 8 |

+

- multimodal

|

| 9 |

+

- vision

|

| 10 |

+

- audio

|

| 11 |

+

- vllm

|

| 12 |

+

language:

|

| 13 |

+

- multilingual

|

| 14 |

+

inference: false

|

| 15 |

+

license: cc-by-nc-4.0

|

| 16 |

+

library_name: transformers

|

| 17 |

+

---

|

| 18 |

+

|

| 19 |

+

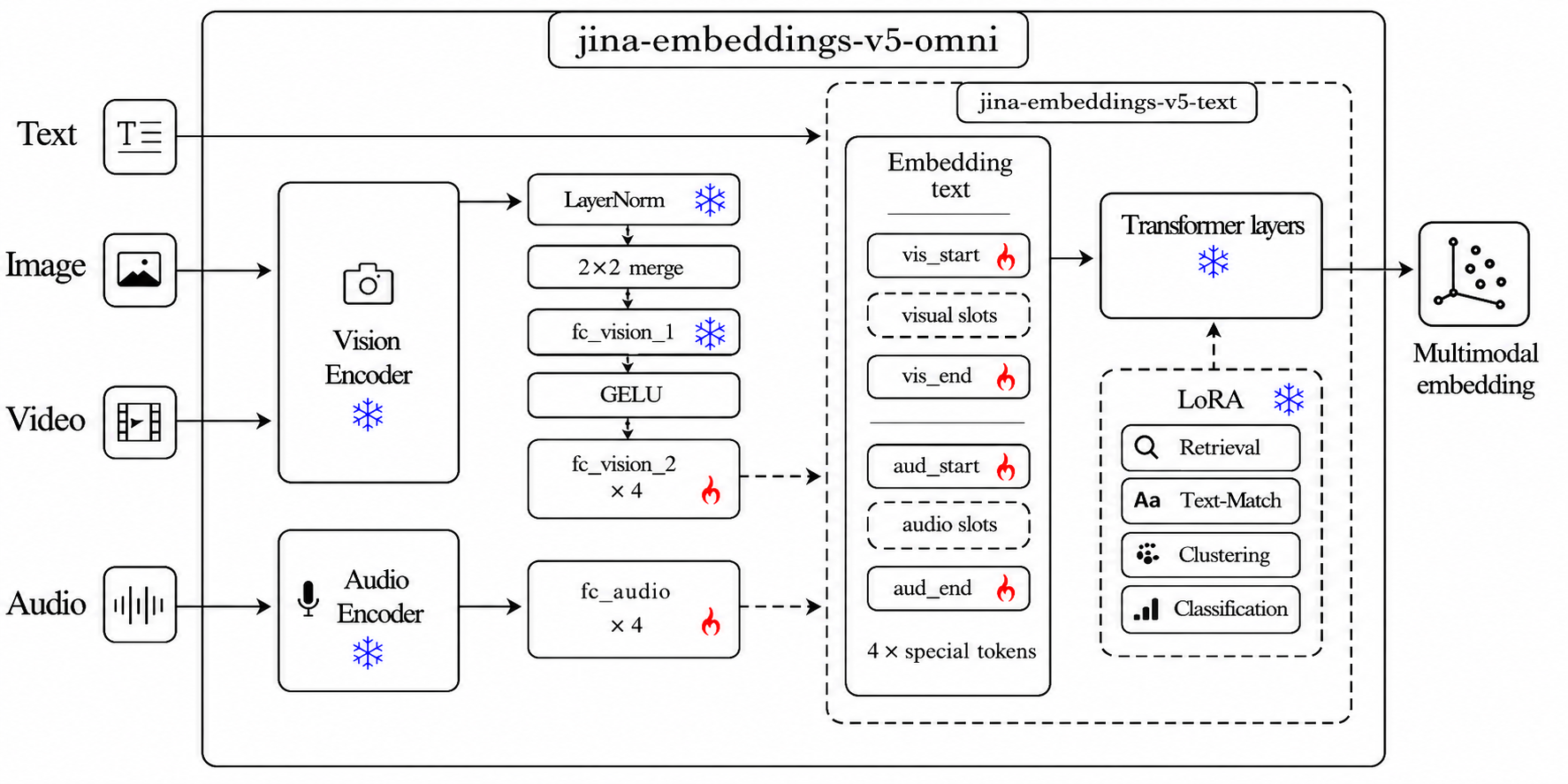

### **jina-embeddings-v5-omni-nano**: Multi-Task Omni Embedding Base (Nano)

|

| 20 |

+

|

| 21 |

+

|

| 22 |

+

|

| 23 |

+

### Model Overview

|

| 24 |

+

|

| 25 |

+

`jina-embeddings-v5-omni-nano` is a multimodal embedding model that accepts **text, images, video, and audio** and produces embeddings in a shared vector space aligned with the text-only [`jinaai/jina-embeddings-v5-text-nano`](https://huggingface.co/jinaai/jina-embeddings-v5-text-nano) — so you can index with text and query with any modality, no reindexing.

|

| 26 |

+

|

| 27 |

+

This is the **base** repository — it holds all task adapters (retrieval, classification, clustering, text-matching). For a single task, pre-merged task-specific variants are also available:

|

| 28 |

+

- [`jinaai/jina-embeddings-v5-omni-nano-classification`](https://huggingface.co/jinaai/jina-embeddings-v5-omni-nano-classification)

|

| 29 |

+

- [`jinaai/jina-embeddings-v5-omni-nano-clustering`](https://huggingface.co/jinaai/jina-embeddings-v5-omni-nano-clustering)

|

| 30 |

+

- [`jinaai/jina-embeddings-v5-omni-nano-retrieval`](https://huggingface.co/jinaai/jina-embeddings-v5-omni-nano-retrieval)

|

| 31 |

+

- [`jinaai/jina-embeddings-v5-omni-nano-text-matching`](https://huggingface.co/jinaai/jina-embeddings-v5-omni-nano-text-matching)

|

| 32 |

+

|

| 33 |

+

| Feature | Value |

|

| 34 |

+

| --- | --- |

|

| 35 |

+

| Parameters | ~1.04B |

|

| 36 |

+

| Embedding Dimension | 768 |

|

| 37 |

+

| Supported Tasks | `retrieval`, `classification`, `clustering`, `text-matching` |

|

| 38 |

+

| Max Sequence Length | 8192 |

|

| 39 |

+

| Pooling Strategy | Last-token |

|

| 40 |

+

| Supported Inputs | text, image, video, audio |

|

| 41 |

+

| Supported File Types | images: `.jpg`, `.jpeg`, `.png`, `.gif`, `.webp`, `.bmp`, `.tif`, `.tiff`, `.avif`, `.heic`, `.svg`; video: `.mp4`, `.avi`, `.mov`, `.mkv`, `.webm`, `.flv`, `.wmv`; audio: `.wav`, `.mp3`, `.flac`, `.ogg`, `.m4a`, `.opus`; documents: `.pdf` |

|

| 42 |

+

|

| 43 |

+

### Install

|

| 44 |

+

|

| 45 |

+

```bash

|

| 46 |

+

# core

|

| 47 |

+

pip install transformers torch pillow numpy

|

| 48 |

+

|

| 49 |

+

# Optional — install only the extras for the modalities you actually use:

|

| 50 |

+

pip install librosa soundfile # audio decoding

|

| 51 |

+

pip install av imageio # video decoding (pure-Python, no codec daemon)

|

| 52 |

+

pip install pdf2image pypdfium2 # PDF rendering

|

| 53 |

+

pip install cairosvg pillow # SVG rendering

|

| 54 |

+

pip install "vllm==0.20.1" # high-throughput serving (validated)

|

| 55 |

+

pip install sentence-transformers # one-call multimodal API

|

| 56 |

+

```

|

| 57 |

+

|

| 58 |

+

For minimum versions see the Requirements section below (transformers >= 4.57, torch >= 2.5; vLLM path validated with vllm == 0.20.1).

|

| 59 |

+

|

| 60 |

+

### Quickstart

|

| 61 |

+

|

| 62 |

+

```python

|

| 63 |

+

from PIL import Image

|

| 64 |

+

import librosa, torch

|

| 65 |

+

from transformers import AutoModel, AutoProcessor, WhisperFeatureExtractor

|

| 66 |

+

|

| 67 |

+

repo = "jinaai/jina-embeddings-v5-omni-nano"

|

| 68 |

+

model = AutoModel.from_pretrained(repo, trust_remote_code=True, default_task="retrieval").eval()

|

| 69 |

+

proc = AutoProcessor.from_pretrained(repo, trust_remote_code=True)

|

| 70 |

+

|

| 71 |

+

# model.embed(**inputs) returns L2-normalized last-token embeddings.

|

| 72 |

+

t_vec = model.embed(**proc(text="Query: Which planet is known as the Red Planet?", return_tensors="pt").to(model.device))

|

| 73 |

+

i_vec = model.embed(**proc(images=Image.open("photo.jpg"), text="<image>", return_tensors="pt").to(model.device))

|

| 74 |

+

v_vec = model.embed(**proc(videos="clip.mp4", text="<image>", return_tensors="pt").to(model.device))

|

| 75 |

+

|

| 76 |

+

# Audio has no string placeholder — build token ids from config.

|

| 77 |

+

audio, _ = librosa.load("speech.wav", sr=16000)

|

| 78 |

+

feat = WhisperFeatureExtractor(feature_size=128)(audio, sampling_rate=16000, return_tensors="pt")["input_features"]

|

| 79 |

+

cfg = model.config

|

| 80 |

+

n = feat.shape[-1] // 4

|

| 81 |

+

ids = torch.tensor([[cfg.audio_start_token_id, *[cfg.audio_token_id]*n, cfg.audio_end_token_id]])

|

| 82 |

+

a_vec = model.embed(

|

| 83 |

+

input_ids=ids.to(model.device),

|

| 84 |

+

attention_mask=torch.ones_like(ids).to(model.device),

|

| 85 |

+

input_features=feat.to(model.device, dtype=next(model.parameters()).dtype),

|

| 86 |

+

)

|

| 87 |

+

```

|

| 88 |

+

|

| 89 |

+

For retrieval, use `encode_query()` for query-side embeddings and `encode_document()` for document-side embeddings. A bare `encode(text)` call does not know which retrieval side you intended.

|

| 90 |

+

|

| 91 |

+

No `dtype`, `device`, `min_pixels`, or custom pooling code needed — sensible defaults live in the model config (bf16 weights, 256–1280 vision tokens).

|

| 92 |

+

|

| 93 |

+

<details>

|

| 94 |

+

<summary>Requirements</summary>

|

| 95 |

+

|

| 96 |

+

- `transformers>=4.57` (recommend >=5.1 for the small variants)

|

| 97 |

+

- `torch>=2.5`

|

| 98 |

+

|

| 99 |

+

Optional:

|

| 100 |

+

- `sentence-transformers` — one-call API for all 4 modalities

|

| 101 |

+

- `librosa` — audio decoding

|

| 102 |

+

- `av` — video decoding (`pip install av`)

|

| 103 |

+

- `vllm==0.20.1` — high-throughput serving; H100 deployments may also need DeepGEMM installed for vLLM FP8 kernels

|

| 104 |

+

|

| 105 |

+

</details>

|

| 106 |

+

|

| 107 |

+

### Selective Modality Loading

|

| 108 |

+

|

| 109 |

+

By default all components (vision + audio towers + text encoder) are loaded.

|

| 110 |

+

To save memory, pick a subset — the unused towers are skipped at load time:

|

| 111 |

+

|

| 112 |

+

```python

|

| 113 |

+

from transformers import AutoModel

|

| 114 |

+

|

| 115 |

+

AutoModel.from_pretrained("jinaai/jina-embeddings-v5-omni-nano", trust_remote_code=True, modality="omni") # all (default)

|

| 116 |

+

AutoModel.from_pretrained("jinaai/jina-embeddings-v5-omni-nano", trust_remote_code=True, modality="vision") # vision + text

|

| 117 |

+

AutoModel.from_pretrained("jinaai/jina-embeddings-v5-omni-nano", trust_remote_code=True, modality="audio") # audio + text

|

| 118 |

+

AutoModel.from_pretrained("jinaai/jina-embeddings-v5-omni-nano", trust_remote_code=True, modality="text") # text only

|

| 119 |

+

```

|

| 120 |

+

|

| 121 |

+

Same parameter works via `sentence-transformers`:

|

| 122 |

+

|

| 123 |

+

```python

|

| 124 |

+

SentenceTransformer("jinaai/jina-embeddings-v5-omni-nano", trust_remote_code=True, model_kwargs={"modality": "vision"})

|

| 125 |

+

```

|

| 126 |

+

|

| 127 |

+

### Via sentence-transformers

|

| 128 |

+

|

| 129 |

+

```python

|

| 130 |

+

from sentence_transformers import SentenceTransformer

|

| 131 |

+

|

| 132 |

+

# Base repo holds all 4 task adapters — pick one at load time.

|

| 133 |

+

model = SentenceTransformer(

|

| 134 |

+

"jinaai/jina-embeddings-v5-omni-nano",

|

| 135 |

+

trust_remote_code=True,

|

| 136 |

+

model_kwargs={"default_task": "retrieval"},

|

| 137 |

+

)

|

| 138 |

+

|

| 139 |

+

# URLs, local paths (with or without extension), PIL.Image, np.ndarray,

|

| 140 |

+

# torch.Tensor, bytes, and BytesIO are all accepted directly.

|

| 141 |

+

q_vec = model.encode_query("Which planet is known as the Red Planet?")

|

| 142 |

+

d_vec = model.encode_document("Mars is often referred to as the Red Planet due to its reddish appearance.")

|

| 143 |

+

i_vec = model.encode("https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/transformers/tasks/car.jpg")

|

| 144 |

+

v_vec = model.encode("https://huggingface.co/datasets/raushan-testing-hf/videos-test/resolve/main/sample_demo_1.mp4") # needs `pip install av`

|

| 145 |

+

a_vec = model.encode("https://huggingface.co/datasets/Narsil/asr_dummy/resolve/main/mlk.flac") # needs `pip install librosa soundfile`

|

| 146 |

+

|

| 147 |

+

# Fused multimodal — a tuple becomes ONE embedding in a single forward pass:

|

| 148 |

+

emb = model.encode(("Winter boots, waterproof leather upper",

|

| 149 |

+

"https://.../boot.jpg",

|

| 150 |

+

"https://.../boot.mp4"))

|

| 151 |

+

```

|

| 152 |

+

|

| 153 |

+

For non-retrieval tasks (classification / clustering / text-matching), reload

|

| 154 |

+

with the corresponding `default_task` — no `prompt_name` needed.

|

| 155 |

+

|

| 156 |

+

No `dtype`, `device`, `min_pixels`, or custom pooling code needed — sensible defaults live in the model config (bf16 weights, 256–1280 vision tokens).

|

| 157 |

+

|

| 158 |

+

<!-- VIDEO_INPUT_TYPES_DETAILS -->

|

| 159 |

+

<details><summary>Accepted video inputs</summary>

|

| 160 |

+

|

| 161 |

+

Path (`.mp4 .avi .mov .mkv .webm .flv .wmv`, or extensionless — content-sniffed), HTTP(S) URL, `bytes`/`io.BytesIO`, and in-memory `np.ndarray` / `torch.Tensor` of shape `(T, H, W, 3|4)` with dtype `uint8`. In-memory arrays are encoded to MP4 on the fly (needs `pip install imageio imageio-ffmpeg`).

|

| 162 |

+

|

| 163 |

+

```python

|

| 164 |

+

import numpy as np

|

| 165 |

+

# (T, H, W, 3) uint8 — e.g. from decord, imageio, or an rgb frame buffer

|

| 166 |

+

frames = np.zeros((16, 224, 224, 3), dtype=np.uint8)

|

| 167 |

+

v_vec = model.encode(frames)

|

| 168 |

+

```

|

| 169 |

+

|

| 170 |

+

</details>

|

| 171 |

+

|

| 172 |

+

### Via vLLM

|

| 173 |

+

|

| 174 |

+

The base repo holds all 4 task adapters. Pick **one task per vLLM instance** via `hf_overrides`:

|

| 175 |

+

|

| 176 |

+

```python

|

| 177 |

+

from vllm import LLM

|

| 178 |

+

llm = LLM(

|

| 179 |

+

model="jinaai/jina-embeddings-v5-omni-nano",

|

| 180 |

+

runner="pooling",

|

| 181 |

+

trust_remote_code=True,

|

| 182 |

+

hf_overrides={"task": "retrieval"}, # or: classification / clustering / text-matching

|

| 183 |

+

)

|

| 184 |

+

outs = llm.embed([{"prompt": "Which planet is known as the Red Planet?"}])

|

| 185 |

+

```

|

| 186 |

+

|

| 187 |

+

Or via CLI:

|

| 188 |

+

|

| 189 |

+

```bash

|

| 190 |

+

vllm serve jinaai/jina-embeddings-v5-omni-nano \

|

| 191 |

+

--trust-remote-code \

|

| 192 |

+

--hf-overrides '{"task": "retrieval"}'

|

| 193 |

+

```

|

| 194 |

+

|

| 195 |

+

Alternatively set `JINA_V5_TASK=retrieval` in the environment. Output is bit-exact

|

| 196 |

+

with the corresponding pre-merged `-retrieval` / `-classification` / `-clustering` /

|

| 197 |

+

`-text-matching` variant.

|

| 198 |

+

|

| 199 |

+

### Matryoshka (truncating embeddings)

|

| 200 |

+

|

| 201 |

+

All three backends support truncating the full embedding to a shorter dimension

|

| 202 |

+

with L2 re-normalization, so the result stays unit-norm:

|

| 203 |

+

|

| 204 |

+

```python

|

| 205 |

+

# transformers

|

| 206 |

+

vec = model.embed(truncate_dim=256, **proc(text="hello", return_tensors="pt"))

|

| 207 |

+

# or

|

| 208 |

+

vec = model.encode(["hello"], task="retrieval", truncate_dim=256)

|

| 209 |

+

|

| 210 |

+

# sentence-transformers

|

| 211 |

+

vec = model.encode("hello", truncate_dim=256)

|

| 212 |

+

|

| 213 |

+

# vLLM — ask the pooler for a smaller embedding

|

| 214 |

+

from vllm import PoolingParams

|

| 215 |

+

outs = llm.embed(prompts, pooling_params=PoolingParams(dimensions=256))

|

| 216 |

+

# or truncate + renormalize the full-dim output yourself:

|

| 217 |

+

import numpy as np

|

| 218 |

+

full = np.asarray(outs[0].outputs.embedding)

|

| 219 |

+

vec = full[:256] / np.linalg.norm(full[:256])

|

| 220 |

+

```

|

| 221 |

+

|

| 222 |

+

<!-- BATCHING_SECTION_START -->

|

| 223 |

+

### Batching

|

| 224 |

+

|

| 225 |

+

Pass a list to encode many inputs in one call.

|

| 226 |

+

|

| 227 |

+

```python

|

| 228 |

+

# sentence-transformers — any modality

|

| 229 |

+

t_vecs = model.encode(["query 1", "query 2"])

|

| 230 |

+

i_vecs = model.encode([Image.open("a.jpg"), Image.open("b.jpg")])

|

| 231 |

+

v_vecs = model.encode(["clip1.mp4", "clip2.mp4"])

|

| 232 |

+

a_vecs = model.encode(["speech1.wav", "speech2.wav"])

|

| 233 |

+

|

| 234 |

+

# raw transformers — text (native padded batch)

|

| 235 |

+

inputs = proc(text=["query 1", "query 2"], padding=True, truncation=True, return_tensors="pt").to(model.device)

|

| 236 |

+

vecs = model.embed(**inputs) # (2, dim)

|

| 237 |

+

|

| 238 |

+

# vLLM — list of request dicts, any modality

|

| 239 |

+

outs = llm.embed([

|

| 240 |

+

{"prompt": "query 1"},

|

| 241 |

+

{"prompt": "query 2"},

|

| 242 |

+

])

|

| 243 |

+

```

|

| 244 |

+

|

| 245 |

+

For `sentence-transformers`, images / video / audio are forwarded per-sample (one forward pass each). Text is truly batched. For large-scale multimodal throughput, prefer `vLLM`.

|

| 246 |

+

|

| 247 |

+

<!-- BATCHING_SECTION_END -->

|

| 248 |

+

|

| 249 |

+

### Compatibility

|

| 250 |

+

|

| 251 |

+

Embeddings produced by this model share a vector space with:

|

| 252 |

+

- [`jinaai/jina-embeddings-v5-text-nano`](https://huggingface.co/jinaai/jina-embeddings-v5-text-nano) — text-only

|

| 253 |

+

- `jinaai/jina-embeddings-v5-text-nano` (via matching adapter)

|

| 254 |

+

|

| 255 |

+

You can index text with the `v5-text-nano` model and query it with image,

|

| 256 |

+

video, or audio embeddings from `jina-embeddings-v5-omni-nano` — no reindexing.

|

| 257 |

+

|

| 258 |

+

### License

|

| 259 |

+

|

| 260 |

+

CC BY-NC 4.0. For commercial use, [contact us](mailto:sales@jina.ai).

|

adapters/classification/adapter_config.json

ADDED

|

@@ -0,0 +1,39 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"alpha_pattern": {},

|

| 3 |

+

"auto_mapping": null,

|

| 4 |

+

"base_model_name_or_path": "jinaai/jina-embeddings-v5-omni-nano",

|

| 5 |

+

"bias": "none",

|

| 6 |

+

"corda_config": null,

|

| 7 |

+

"eva_config": null,

|

| 8 |

+

"exclude_modules": null,

|

| 9 |

+

"fan_in_fan_out": false,

|

| 10 |

+

"inference_mode": true,

|

| 11 |

+

"init_lora_weights": "gaussian",

|

| 12 |

+

"layer_replication": null,

|

| 13 |

+

"layers_pattern": null,

|

| 14 |

+

"layers_to_transform": null,

|

| 15 |

+

"loftq_config": {},

|

| 16 |

+

"lora_alpha": 32,

|

| 17 |

+

"lora_bias": false,

|

| 18 |

+

"lora_dropout": 0.05,

|

| 19 |

+

"megatron_config": null,

|

| 20 |

+

"megatron_core": "megatron.core",

|

| 21 |

+

"modules_to_save": null,

|

| 22 |

+

"peft_type": "LORA",

|

| 23 |

+

"r": 32,

|

| 24 |

+

"rank_pattern": {},

|

| 25 |

+

"revision": null,

|

| 26 |

+

"target_modules": [

|

| 27 |

+

"o_proj",

|

| 28 |

+

"k_proj",

|

| 29 |

+

"q_proj",

|

| 30 |

+

"down_proj",

|

| 31 |

+

"gate_proj",

|

| 32 |

+

"v_proj",

|

| 33 |

+

"up_proj"

|

| 34 |

+

],

|

| 35 |

+

"task_type": "FEATURE_EXTRACTION",

|

| 36 |

+

"trainable_token_indices": null,

|

| 37 |

+

"use_dora": false,

|

| 38 |

+

"use_rslora": false

|

| 39 |

+

}

|

adapters/classification/adapter_model.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:13c8389381221af49bbe1d40231f50b28e354c901af57e6b1a1b3a6ec34f42b2

|

| 3 |

+

size 13589512

|

adapters/clustering/adapter_config.json

ADDED

|

@@ -0,0 +1,39 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"alpha_pattern": {},

|

| 3 |

+

"auto_mapping": null,

|

| 4 |

+

"base_model_name_or_path": "jinaai/jina-embeddings-v5-omni-nano",

|

| 5 |

+

"bias": "none",

|

| 6 |

+

"corda_config": null,

|

| 7 |

+

"eva_config": null,

|

| 8 |

+

"exclude_modules": null,

|

| 9 |

+

"fan_in_fan_out": false,

|

| 10 |

+

"inference_mode": true,

|

| 11 |

+

"init_lora_weights": "gaussian",

|

| 12 |

+

"layer_replication": null,

|

| 13 |

+

"layers_pattern": null,

|

| 14 |

+

"layers_to_transform": null,

|

| 15 |

+

"loftq_config": {},

|

| 16 |

+

"lora_alpha": 32,

|

| 17 |

+

"lora_bias": false,

|

| 18 |

+

"lora_dropout": 0.1,

|

| 19 |

+

"megatron_config": null,

|

| 20 |

+

"megatron_core": "megatron.core",

|

| 21 |

+

"modules_to_save": null,

|

| 22 |

+

"peft_type": "LORA",

|

| 23 |

+

"r": 32,

|

| 24 |

+

"rank_pattern": {},

|

| 25 |

+

"revision": null,

|

| 26 |

+

"target_modules": [

|

| 27 |

+

"q_proj",

|

| 28 |

+

"down_proj",

|

| 29 |

+

"gate_proj",

|

| 30 |

+

"v_proj",

|

| 31 |

+

"o_proj",

|

| 32 |

+

"k_proj",

|

| 33 |

+

"up_proj"

|

| 34 |

+

],

|

| 35 |

+

"task_type": "FEATURE_EXTRACTION",

|

| 36 |

+

"trainable_token_indices": null,

|

| 37 |

+

"use_dora": false,

|

| 38 |

+

"use_rslora": false

|

| 39 |

+

}

|

adapters/clustering/adapter_model.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:030822246d646a74a9886410eddcc80c663384bcaeacd31a869a523f35268c5f

|

| 3 |

+

size 13589512

|

adapters/retrieval/adapter_config.json

ADDED

|

@@ -0,0 +1,39 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"alpha_pattern": {},

|

| 3 |

+

"auto_mapping": null,

|

| 4 |

+

"base_model_name_or_path": "jinaai/jina-embeddings-v5-omni-nano",

|

| 5 |

+

"bias": "none",

|

| 6 |

+

"corda_config": null,

|

| 7 |

+

"eva_config": null,

|

| 8 |

+

"exclude_modules": null,

|

| 9 |

+

"fan_in_fan_out": false,

|

| 10 |

+

"inference_mode": true,

|

| 11 |

+

"init_lora_weights": "gaussian",

|

| 12 |

+

"layer_replication": null,

|

| 13 |

+

"layers_pattern": null,

|

| 14 |

+

"layers_to_transform": null,

|

| 15 |

+

"loftq_config": {},

|

| 16 |

+

"lora_alpha": 32,

|

| 17 |

+

"lora_bias": false,

|

| 18 |

+

"lora_dropout": 0.1,

|

| 19 |

+

"megatron_config": null,

|

| 20 |

+

"megatron_core": "megatron.core",

|

| 21 |

+

"modules_to_save": null,

|

| 22 |

+

"peft_type": "LORA",

|

| 23 |

+

"r": 32,

|

| 24 |

+

"rank_pattern": {},

|

| 25 |

+

"revision": null,

|

| 26 |

+

"target_modules": [

|

| 27 |

+

"gate_proj",

|

| 28 |

+

"v_proj",

|

| 29 |

+

"q_proj",

|

| 30 |

+

"down_proj",

|

| 31 |

+

"o_proj",

|

| 32 |

+

"k_proj",

|

| 33 |

+

"up_proj"

|

| 34 |

+

],

|

| 35 |

+

"task_type": "FEATURE_EXTRACTION",

|

| 36 |

+

"trainable_token_indices": null,

|

| 37 |

+

"use_dora": false,

|

| 38 |

+

"use_rslora": false

|

| 39 |

+

}

|

adapters/retrieval/adapter_model.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:9c2b4bd34101afd04833c5626958724e7587c292ffc6564788cfa10af89a2157

|

| 3 |

+

size 13589512

|

adapters/text-matching/adapter_config.json

ADDED

|

@@ -0,0 +1,39 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"alpha_pattern": {},

|

| 3 |

+

"auto_mapping": null,

|

| 4 |

+

"base_model_name_or_path": "jinaai/jina-embeddings-v5-omni-nano",

|

| 5 |

+

"bias": "none",

|

| 6 |

+

"corda_config": null,

|

| 7 |

+

"eva_config": null,

|

| 8 |

+

"exclude_modules": null,

|

| 9 |

+

"fan_in_fan_out": false,

|

| 10 |

+

"inference_mode": true,

|

| 11 |

+

"init_lora_weights": "gaussian",

|

| 12 |

+

"layer_replication": null,

|

| 13 |

+

"layers_pattern": null,

|

| 14 |

+

"layers_to_transform": null,

|

| 15 |

+

"loftq_config": {},

|

| 16 |

+

"lora_alpha": 32,

|

| 17 |

+

"lora_bias": false,

|

| 18 |

+

"lora_dropout": 0.1,

|

| 19 |

+

"megatron_config": null,

|

| 20 |

+

"megatron_core": "megatron.core",

|

| 21 |

+

"modules_to_save": null,

|

| 22 |

+

"peft_type": "LORA",

|

| 23 |

+

"r": 32,

|

| 24 |

+

"rank_pattern": {},

|

| 25 |

+

"revision": null,

|

| 26 |

+

"target_modules": [

|

| 27 |

+

"k_proj",

|

| 28 |

+

"v_proj",

|

| 29 |

+

"o_proj",

|

| 30 |

+

"down_proj",

|

| 31 |

+

"q_proj",

|

| 32 |

+

"gate_proj",

|

| 33 |

+

"up_proj"

|

| 34 |

+

],

|

| 35 |

+

"task_type": "FEATURE_EXTRACTION",

|

| 36 |

+

"trainable_token_indices": null,

|

| 37 |

+

"use_dora": false,

|

| 38 |

+

"use_rslora": false

|

| 39 |

+

}

|

adapters/text-matching/adapter_model.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:24bb801dbc7e565e9a64e12ef0049fc2631c381f50a215a83cf90fe0ccba2e0e

|

| 3 |

+

size 13589512

|

architecture.png

ADDED

|

Git LFS Details

|

chat_template.jinja

ADDED

|

@@ -0,0 +1,154 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{%- set image_count = namespace(value=0) %}

|

| 2 |

+

{%- set video_count = namespace(value=0) %}

|

| 3 |

+

{%- macro render_content(content, do_vision_count, is_system_content=false) %}

|

| 4 |

+

{%- if content is string %}

|

| 5 |

+

{{- content }}

|

| 6 |

+

{%- elif content is iterable and content is not mapping %}

|

| 7 |

+

{%- for item in content %}

|

| 8 |

+

{%- if 'image' in item or 'image_url' in item or item.type == 'image' %}

|

| 9 |

+

{%- if is_system_content %}

|

| 10 |

+

{{- raise_exception('System message cannot contain images.') }}

|

| 11 |

+

{%- endif %}

|

| 12 |

+

{%- if do_vision_count %}

|

| 13 |

+

{%- set image_count.value = image_count.value + 1 %}

|

| 14 |

+

{%- endif %}

|

| 15 |

+

{%- if add_vision_id %}

|

| 16 |

+

{{- 'Picture ' ~ image_count.value ~ ': ' }}

|

| 17 |

+

{%- endif %}

|

| 18 |

+

{{- '<|vision_start|><|image_pad|><|vision_end|>' }}

|

| 19 |

+

{%- elif 'video' in item or item.type == 'video' %}

|

| 20 |

+

{%- if is_system_content %}

|

| 21 |

+

{{- raise_exception('System message cannot contain videos.') }}

|

| 22 |

+

{%- endif %}

|

| 23 |

+

{%- if do_vision_count %}

|

| 24 |

+

{%- set video_count.value = video_count.value + 1 %}

|

| 25 |

+

{%- endif %}

|

| 26 |

+

{%- if add_vision_id %}

|

| 27 |

+

{{- 'Video ' ~ video_count.value ~ ': ' }}

|

| 28 |

+

{%- endif %}

|

| 29 |

+

{{- '<|vision_start|><|video_pad|><|vision_end|>' }}

|

| 30 |

+

{%- elif 'text' in item %}

|

| 31 |

+

{{- item.text }}

|

| 32 |

+

{%- else %}

|

| 33 |

+

{{- raise_exception('Unexpected item type in content.') }}

|

| 34 |

+

{%- endif %}

|

| 35 |

+

{%- endfor %}

|

| 36 |

+

{%- elif content is none or content is undefined %}

|

| 37 |

+

{{- '' }}

|

| 38 |

+

{%- else %}

|

| 39 |

+

{{- raise_exception('Unexpected content type.') }}

|

| 40 |

+

{%- endif %}

|

| 41 |

+

{%- endmacro %}

|

| 42 |

+

{%- if not messages %}

|

| 43 |

+

{{- raise_exception('No messages provided.') }}

|

| 44 |

+

{%- endif %}

|

| 45 |

+

{%- if tools and tools is iterable and tools is not mapping %}

|

| 46 |

+

{{- '<|im_start|>system\n' }}

|

| 47 |

+

{{- "# Tools\n\nYou have access to the following functions:\n\n<tools>" }}

|

| 48 |

+

{%- for tool in tools %}

|

| 49 |

+

{{- "\n" }}

|

| 50 |

+

{{- tool | tojson }}

|

| 51 |

+

{%- endfor %}

|

| 52 |

+

{{- "\n</tools>" }}

|

| 53 |

+

{{- '\n\nIf you choose to call a function ONLY reply in the following format with NO suffix:\n\n<tool_call>\n<function=example_function_name>\n<parameter=example_parameter_1>\nvalue_1\n</parameter>\n<parameter=example_parameter_2>\nThis is the value for the second parameter\nthat can span\nmultiple lines\n</parameter>\n</function>\n</tool_call>\n\n<IMPORTANT>\nReminder:\n- Function calls MUST follow the specified format: an inner <function=...></function> block must be nested within <tool_call></tool_call> XML tags\n- Required parameters MUST be specified\n- You may provide optional reasoning for your function call in natural language BEFORE the function call, but NOT after\n- If there is no function call available, answer the question like normal with your current knowledge and do not tell the user about function calls\n</IMPORTANT>' }}

|

| 54 |

+

{%- if messages[0].role == 'system' %}

|

| 55 |

+

{%- set content = render_content(messages[0].content, false, true)|trim %}

|

| 56 |

+

{%- if content %}

|

| 57 |

+

{{- '\n\n' + content }}

|

| 58 |

+

{%- endif %}

|

| 59 |

+

{%- endif %}

|

| 60 |

+

{{- '<|im_end|>\n' }}

|

| 61 |

+

{%- else %}

|

| 62 |

+

{%- if messages[0].role == 'system' %}

|

| 63 |

+

{%- set content = render_content(messages[0].content, false, true)|trim %}

|

| 64 |

+

{{- '<|im_start|>system\n' + content + '<|im_end|>\n' }}

|

| 65 |

+

{%- endif %}

|

| 66 |

+

{%- endif %}

|

| 67 |

+

{%- set ns = namespace(multi_step_tool=true, last_query_index=messages|length - 1) %}

|

| 68 |

+

{%- for message in messages[::-1] %}

|

| 69 |

+

{%- set index = (messages|length - 1) - loop.index0 %}

|

| 70 |

+

{%- if ns.multi_step_tool and message.role == "user" %}

|

| 71 |

+

{%- set content = render_content(message.content, false)|trim %}

|

| 72 |

+

{%- if not(content.startswith('<tool_response>') and content.endswith('</tool_response>')) %}

|

| 73 |

+

{%- set ns.multi_step_tool = false %}

|

| 74 |

+

{%- set ns.last_query_index = index %}

|

| 75 |

+

{%- endif %}

|

| 76 |

+

{%- endif %}

|

| 77 |

+

{%- endfor %}

|

| 78 |

+

{%- if ns.multi_step_tool %}

|

| 79 |

+

{{- raise_exception('No user query found in messages.') }}

|

| 80 |

+

{%- endif %}

|

| 81 |

+

{%- for message in messages %}

|

| 82 |

+

{%- set content = render_content(message.content, true)|trim %}

|

| 83 |

+

{%- if message.role == "system" %}

|

| 84 |

+

{%- if not loop.first %}

|

| 85 |

+

{{- raise_exception('System message must be at the beginning.') }}

|

| 86 |

+

{%- endif %}

|

| 87 |

+

{%- elif message.role == "user" %}

|

| 88 |

+

{{- '<|im_start|>' + message.role + '\n' + content + '<|im_end|>' + '\n' }}

|

| 89 |

+

{%- elif message.role == "assistant" %}

|

| 90 |

+

{%- set reasoning_content = '' %}

|

| 91 |

+

{%- if message.reasoning_content is string %}

|

| 92 |

+

{%- set reasoning_content = message.reasoning_content %}

|

| 93 |

+

{%- else %}

|

| 94 |

+

{%- if '</think>' in content %}

|

| 95 |

+

{%- set reasoning_content = content.split('</think>')[0].rstrip('\n').split('<think>')[-1].lstrip('\n') %}

|

| 96 |

+

{%- set content = content.split('</think>')[-1].lstrip('\n') %}

|

| 97 |

+

{%- endif %}

|

| 98 |

+

{%- endif %}

|

| 99 |

+

{%- set reasoning_content = reasoning_content|trim %}

|

| 100 |

+

{%- if loop.index0 > ns.last_query_index %}

|

| 101 |

+

{{- '<|im_start|>' + message.role + '\n<think>\n' + reasoning_content + '\n</think>\n\n' + content }}

|

| 102 |

+

{%- else %}

|

| 103 |

+

{{- '<|im_start|>' + message.role + '\n' + content }}

|

| 104 |

+

{%- endif %}

|

| 105 |

+

{%- if message.tool_calls and message.tool_calls is iterable and message.tool_calls is not mapping %}

|

| 106 |

+

{%- for tool_call in message.tool_calls %}

|

| 107 |

+

{%- if tool_call.function is defined %}

|

| 108 |

+

{%- set tool_call = tool_call.function %}

|

| 109 |

+

{%- endif %}

|

| 110 |

+

{%- if loop.first %}

|

| 111 |

+

{%- if content|trim %}

|

| 112 |

+

{{- '\n\n<tool_call>\n<function=' + tool_call.name + '>\n' }}

|

| 113 |

+

{%- else %}

|

| 114 |

+

{{- '<tool_call>\n<function=' + tool_call.name + '>\n' }}

|

| 115 |

+

{%- endif %}

|

| 116 |

+

{%- else %}

|

| 117 |

+

{{- '\n<tool_call>\n<function=' + tool_call.name + '>\n' }}

|

| 118 |

+

{%- endif %}

|

| 119 |

+

{%- if tool_call.arguments is defined %}

|

| 120 |

+

{%- for args_name, args_value in tool_call.arguments|items %}

|

| 121 |

+

{{- '<parameter=' + args_name + '>\n' }}

|

| 122 |

+

{%- set args_value = args_value | tojson | safe if args_value is mapping or (args_value is sequence and args_value is not string) else args_value | string %}

|

| 123 |

+

{{- args_value }}

|

| 124 |

+

{{- '\n</parameter>\n' }}

|

| 125 |

+

{%- endfor %}

|

| 126 |

+

{%- endif %}

|

| 127 |

+

{{- '</function>\n</tool_call>' }}

|

| 128 |

+

{%- endfor %}

|

| 129 |

+

{%- endif %}

|

| 130 |

+

{{- '<|im_end|>\n' }}

|

| 131 |

+

{%- elif message.role == "tool" %}

|

| 132 |

+

{%- if loop.previtem and loop.previtem.role != "tool" %}

|

| 133 |

+

{{- '<|im_start|>user' }}

|

| 134 |

+

{%- endif %}

|

| 135 |

+

{{- '\n<tool_response>\n' }}

|

| 136 |

+

{{- content }}

|

| 137 |

+

{{- '\n</tool_response>' }}

|

| 138 |

+

{%- if not loop.last and loop.nextitem.role != "tool" %}

|

| 139 |

+

{{- '<|im_end|>\n' }}

|

| 140 |

+

{%- elif loop.last %}

|

| 141 |

+

{{- '<|im_end|>\n' }}

|

| 142 |

+

{%- endif %}

|

| 143 |

+

{%- else %}

|

| 144 |

+

{{- raise_exception('Unexpected message role.') }}

|

| 145 |

+

{%- endif %}

|

| 146 |

+

{%- endfor %}

|

| 147 |

+

{%- if add_generation_prompt %}

|

| 148 |

+

{{- '<|im_start|>assistant\n' }}

|

| 149 |

+

{%- if enable_thinking is defined and enable_thinking is true %}

|

| 150 |

+

{{- '<think>\n' }}

|

| 151 |

+

{%- else %}

|

| 152 |

+

{{- '<think>\n\n</think>\n\n' }}

|

| 153 |

+

{%- endif %}

|

| 154 |

+

{%- endif %}

|

config.json

ADDED

|

@@ -0,0 +1,101 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"architectures": [

|

| 3 |

+

"JinaEmbeddingsV5OmniModel"

|

| 4 |

+

],

|

| 5 |

+

"auto_map": {

|

| 6 |

+

"AutoConfig": "modeling_jina_embeddings_v5_omni.JinaEmbeddingsV5OmniConfig",

|

| 7 |

+

"AutoModel": "modeling_jina_embeddings_v5_omni.JinaEmbeddingsV5OmniModel"

|

| 8 |

+

},

|

| 9 |

+

"model_type": "jina_embeddings_v5_omni",

|

| 10 |

+

"task_names": [

|

| 11 |

+

"retrieval",

|

| 12 |

+

"text-matching",

|

| 13 |

+

"clustering",

|

| 14 |

+

"classification"

|

| 15 |

+

],

|

| 16 |

+

"special_token_ids": [

|

| 17 |

+

128256,

|

| 18 |

+

128257,

|

| 19 |

+

128258,

|

| 20 |

+

128259

|

| 21 |

+

],

|

| 22 |

+

"vision_config": {

|

| 23 |

+

"deepstack_visual_indexes": [],

|

| 24 |

+

"depth": 12,

|

| 25 |

+

"dtype": "bfloat16",

|

| 26 |

+

"hidden_act": "gelu_pytorch_tanh",

|

| 27 |

+

"hidden_size": 768,

|

| 28 |

+

"in_channels": 3,

|

| 29 |

+

"initializer_range": 0.02,

|

| 30 |

+

"intermediate_size": 3072,

|

| 31 |

+

"model_type": "",

|

| 32 |

+

"num_heads": 12,

|

| 33 |

+

"num_position_embeddings": 2304,

|

| 34 |

+

"out_hidden_size": 1024,

|

| 35 |

+

"patch_size": 16,

|

| 36 |

+

"spatial_merge_size": 2,

|

| 37 |

+

"temporal_patch_size": 2

|

| 38 |

+

},

|

| 39 |

+

"text_config": {

|

| 40 |

+

"attention_bias": false,

|

| 41 |

+

"attention_dropout": 0.0,

|

| 42 |

+

"bos_token_id": 1,

|

| 43 |

+

"eos_token_id": 2,

|

| 44 |

+

"head_dim": 64,

|

| 45 |

+

"hidden_act": "silu",

|

| 46 |

+

"hidden_size": 768,

|

| 47 |

+

"initializer_range": 0.02,

|

| 48 |

+

"intermediate_size": 3072,

|

| 49 |

+

"is_causal": false,

|

| 50 |

+

"max_position_embeddings": 8192,

|

| 51 |

+

"mlp_bias": false,

|

| 52 |

+

"model_type": "",

|

| 53 |

+

"num_attention_heads": 12,

|

| 54 |

+

"num_hidden_layers": 12,

|

| 55 |

+

"num_key_value_heads": 12,

|

| 56 |

+

"pad_token_id": null,

|

| 57 |

+

"pretraining_tp": 1,

|

| 58 |

+

"rms_norm_eps": 1e-05,

|

| 59 |

+

"rope_parameters": {

|

| 60 |

+

"rope_theta": 1000000.0,

|

| 61 |

+

"rope_type": "default"

|

| 62 |

+

},

|

| 63 |

+

"tie_word_embeddings": false,

|

| 64 |

+

"vocab_size": 128260

|

| 65 |

+

},

|

| 66 |

+

"audio_config": {

|

| 67 |

+

"activation_dropout": 0.0,

|

| 68 |

+

"activation_function": "gelu",

|

| 69 |

+

"attention_dropout": 0.0,

|

| 70 |

+

"d_model": 1280,

|

| 71 |

+

"dropout": 0.0,

|

| 72 |

+

"dtype": "float32",

|

| 73 |

+

"encoder_attention_heads": 20,

|

| 74 |

+

"encoder_ffn_dim": 5120,

|

| 75 |

+

"encoder_layers": 32,

|

| 76 |

+

"initializer_range": 0.02,

|

| 77 |

+

"max_source_positions": 1500,

|

| 78 |

+

"num_mel_bins": 128,

|

| 79 |

+

"scale_embedding": false,

|

| 80 |

+

"n_window": 100,

|

| 81 |

+

"output_dim": 3584

|

| 82 |

+

},

|

| 83 |

+

"image_token_index": 128259,

|

| 84 |

+

"audio_token_id": 128256,

|

| 85 |

+

"audio_start_token_id": 128257,

|

| 86 |

+

"audio_end_token_id": 128258,

|

| 87 |

+

"projector_hidden_act": "gelu",

|

| 88 |

+

"tie_word_embeddings": false,

|

| 89 |

+

"dtype": "bfloat16",

|

| 90 |

+

"transformers_version": "5.4.0",

|

| 91 |

+

"torch_dtype": "bfloat16",

|

| 92 |

+

"is_matryoshka": true,

|

| 93 |

+

"matryoshka_dimensions": [

|

| 94 |

+

32,

|

| 95 |

+

64,

|

| 96 |

+

128,

|

| 97 |

+

256,

|

| 98 |

+

512,

|

| 99 |

+

768

|

| 100 |

+

]

|

| 101 |

+

}

|

config_sentence_transformers.json

ADDED

|

@@ -0,0 +1,8 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"prompts": {

|

| 3 |

+

"query": "Query: ",

|

| 4 |

+

"document": "Document: "

|

| 5 |

+

},

|

| 6 |

+

"default_prompt_name": null,

|

| 7 |

+

"similarity_fn_name": "cosine"

|

| 8 |

+

}

|

custom_st.py

ADDED

|

@@ -0,0 +1,990 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|